About my academic research

My post-doc project in Dr. Jonathan Brennan's Computational Neurolinguistics Lab at the University of Michigan involved taking EEG recordings from interlocutors in unscripted conversation to build a neurolinguistic corpus of naturalistic native and second language speech. In a subsequent step, we tested competing models of L1 and L2 grammar processing to see which theories better align with the observed brainwaves. This work was supported by National Science Foundation SPRF Postdoctoral Fellowship award number 2203723.

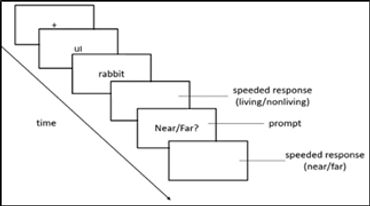

My PhD dissertation at the University of Illinois - Chicago in Dr. Kara Morgan-Short's Cognition of Second Language Acquisition lab used machine learning to pull apart neural indices of conscious vs. subconscious L2 processing. In my experiment, participants were exposed to an artificial language with a hidden grammar pattern. By triangulating language task reaction times, subjective reports, and EEG data, I tested whether focusing on a grammar rule helps or hinders acquisition at a more implicit level. The long game is to find an optimal trade-off between form- vs. meaning-based approaches to language learning, asking questions such as: can L2 learners deliberately change how they allocate attention during real-time L2 processing to improve acquisition? The cherry on top would be to tailor these recommendations according to individuals' particular profiles (which may vary along dimensions of working memory, executive control, sheer tolerance for boredom during repetitive practice, etc.). This was supported by National Science Foundation Dissertation Improvement Grant award number 1941189 as well as a Dingwall Foundation Dissertation Fellowship in the Cognitive, Clinical, and Neural Foundations of Language, an American Philosophical Society John Hope Franklin Dissertation Fellowship, and an Institutes for Citizens & Scholars (formerly Woodrow Wilson National Fellowship Foundation)/Mellon Mays Fellowship Travel and Research Grant.

I also researched how grammar learning can be conceived as the acquisition of recurring statistical patterns in L2 input, such that grammatical constructions emerge gradually through abstraction over individual exemplars of a particular form. Language educators could facilitate this process by manipulating factors like token frequency ("How often do you encounter this particular form?"), type frequency ("How many different candidate forms do you see in this particular context?"), and input skewedness ("For this context, to what extent do one or a few forms make up the lion's share of the input?"). Beyond informing psycholinguistic theory, this work addresses practical teaching questions such as: does encountering a form more frequently necessarily make it easier to recognize and produce? Is it better to teach new grammar by using a wide variety of words in the examples, or by sticking to a few familiar vocabulary items? Should teachers try to emulate the input frequencies encountered "in the real world," or is it possible to tailor classroom input to facilitate L2 acquisition?
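For the computationally inclined, here is a rough illustration of how token frequency, type frequency, and input skewedness could be quantified for a single context. This is only a sketch, not the analysis used in the research itself: the toy data, the function name `input_profile`, and the choice to summarize skew as the share of input taken up by the most frequent form are all assumptions made for the example.

```python
from collections import Counter

# Hypothetical toy input: (context, form) pairs a learner might encounter.
toy_input = [
    ("past_tense", "walked"),
    ("past_tense", "walked"),
    ("past_tense", "walked"),
    ("past_tense", "jumped"),
    ("past_tense", "played"),
    ("past_tense", "walked"),
]


def input_profile(observations, context):
    """Summarize the input for one context (illustrative helper, not a standard API)."""
    forms = [form for ctx, form in observations if ctx == context]
    if not forms:
        raise ValueError(f"no observations for context {context!r}")

    counts = Counter(forms)
    total = sum(counts.values())
    top_form, top_count = counts.most_common(1)[0]

    return {
        # Token frequency: how often each particular form occurs.
        "token_frequency": dict(counts),
        # Type frequency: how many distinct forms appear in this context.
        "type_frequency": len(counts),
        # Skew (assumed proxy): share of the input taken by the most frequent form.
        "top_form": top_form,
        "top_form_share": top_count / total,
    }


profile = input_profile(toy_input, "past_tense")
print(profile)
```

A share near 1 means one form dominates the input (highly skewed), while a share near 1 divided by the type frequency means the forms are roughly balanced.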

I've also dipped my toes into corpus research with projects like:

- profiling the richness of language learner vocabulary through word frequency analyses of written essays

- analyses of how semantic word properties affect development of Spanish dative constructions across L2 writers at different proficiency levels

- comparisons of heritage vs. native speaker collocations in Spanish dative clitics

Check out some recorded conference presentations below!


Presentations

Cognitive Science Society 2021 conference recording

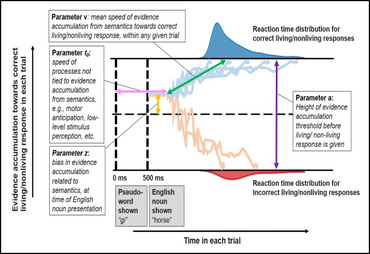

Drift diffusion modeling of reaction times in an artificial language experiment shows that apparent learning effects in participants without conscious awareness of an underlying rule were actually driven by motor adaptation to predictable button-press sequences, whereas rule-aware learners showed both motor adaptation and sensitivity to noun semantics

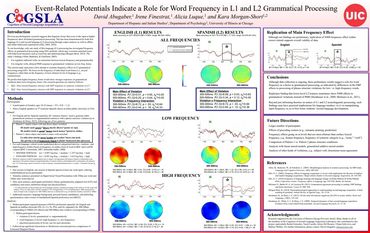

Cognitive Neuroscience Society 2021 conference recording

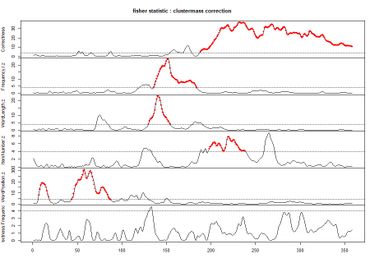

Mass univariate analyses of EEG data support dual-route models of morphosyntax processing (in other words, your brain composes "eat + s" instead of memorizing "eats" as one word)

American Association for Applied Linguistics 2021 conference recording

Language learners with conscious awareness of a grammar rule perform similarly (with some caveats) whether they were told the rule or figured it out themselves

Association for Psychological Science 2020 conference recording

Language learners with vs. without conscious awareness of a grammar rule they've learned have differently shaped reaction time distributions, hinting at different underlying cognitive processes

XV International Symposium of Psycholinguistics 2021 conference recording

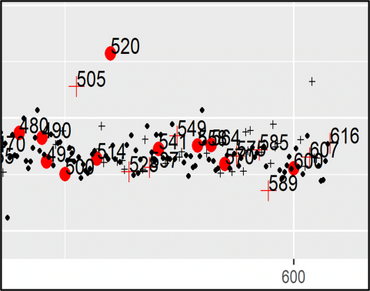

Assessing individual neurocognitive differences in native language morphosyntactic processing along an N400-P600 ERP continuum

Presented by first author and ex-labmate Dr. Irene Finestrat :)

CUNY Conference on Human Sentence Processing 2020

Do first language neural processes for morphosyntax transfer to the second language?

Presented by first author Dr. Irene Finestrat

Cognitive Neuroscience Society 2021

Neural oscillations measured via EEG as predictors of variability in second language learning

Yay resting state meditation!

Presented by first author, CogSLA undergraduate research assistant, and pre-medical student extraordinaire Victoria Ogunniyi

Society for the Neurobiology of Language 2020

Examining individual variability in event-related potential responses and expanding the evidence to the second language

Presented by first author Dr. Irene Finestrat

2014 Gates Cambridge internal symposium talk

New (as of 7 years ago) experimental methods in second language learning research

The methods have only gotten better since then, y'all! Machine learning-based brain decoding leaves univariate EEG and fMRI eating its dust.

PhD dissertation defense

From May 2022, titled "Disentangling neural indices of implicit vs. explicit morphosyntax processing in an artificial language."